I Built an AI That Reviews AWS Architecture Diagrams Against the Well-Architected Framework

Upload a diagram. Get a structured review across all six pillars in under 30 seconds — no AWS consultant required.

romanceresnak/aws-architecture-reviewer

Clone, enable Bedrock model access, run make deploy. Your architecture gets its first reviewer in under 5 minutes.

Every architecture review I've ever sat through follows the same pattern. Someone shares a diagram in Confluence. A cloud architect (usually me) opens it, scans for the obvious issues, and writes a list of comments. Single AZ deployment. No WAF. Missing caching layer. The same class of problems, week after week.

Manual review isn't bad because architects are bad at it. It's bad because it's slow, it doesn't scale, and the quality depends entirely on who's in the room that day. A junior team shipping without a dedicated reviewer gets nothing. A senior team gets feedback three days after the diagram was drawn.

I wanted a first pass that happens instantly — before any human is involved. So I built one.

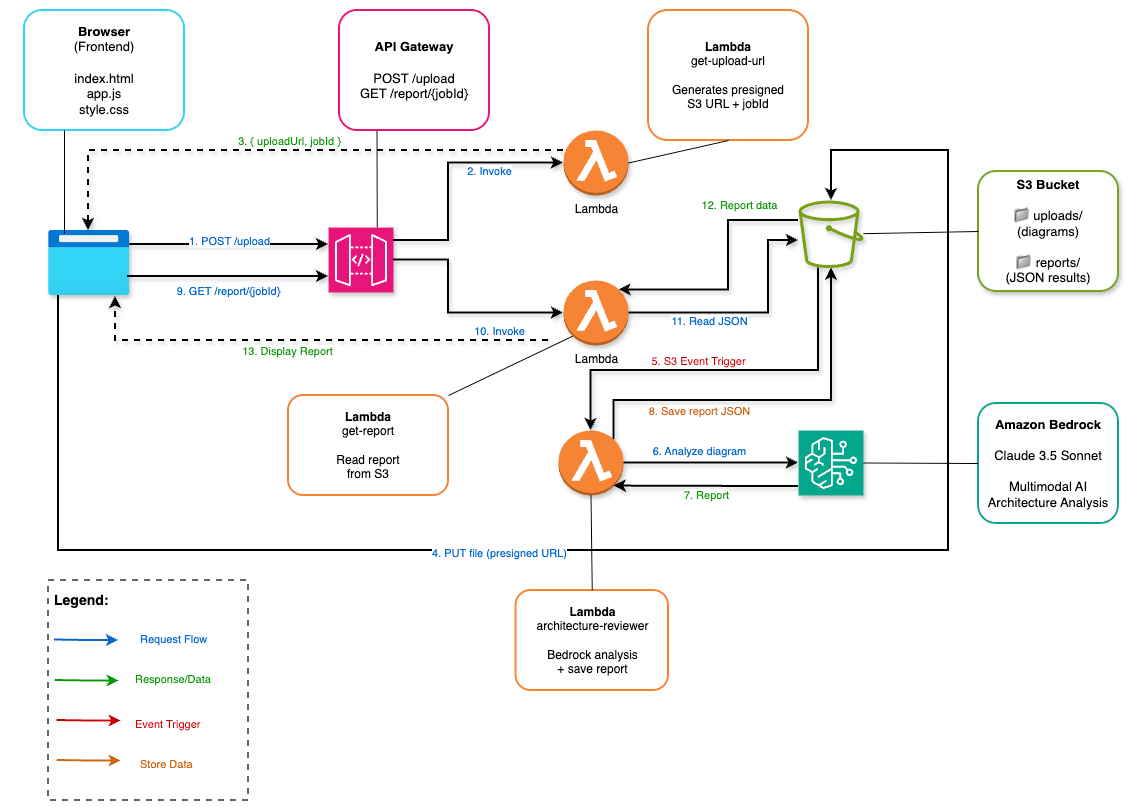

How It Works

The premise is simple: upload an architecture diagram, get back a structured Well-Architected Framework review. No account, no API key, no consultant. The AI identifies AWS services in the image, evaluates them against all six WAF pillars, scores each one, and generates a prioritized list of issues and recommendations.

The application itself is entirely serverless. S3, Lambda, API Gateway, Bedrock. No EC2, no containers, no infrastructure to babysit.

The flow in 13 steps:

- Browser sends POST /upload to API Gateway

- Lambda (get-upload-url) generates a presigned S3 URL and a jobId

- Browser receives { uploadUrl, jobId }

- Browser uploads the diagram directly to S3 via presigned URL (PUT)

- S3 fires an event trigger to the reviewer Lambda

- Reviewer Lambda sends the image to Amazon Bedrock (Claude 3.5 Sonnet)

- Bedrock returns the analysis report

- Report saved as JSON to reports/{jobId}.json in S3

- Browser polls GET /report/{jobId}

- API Gateway invokes the get-report Lambda

- Lambda reads the JSON from S3

- Report data returned to browser

- UI renders the full review

No websockets, no queues, and that is intentional. Polling every 3 seconds is simple, predictable, and requires zero additional infrastructure; a typical review is ready within about 30 seconds.

The Frontend

The upload interface is a plain HTML/CSS/JS application with no framework dependencies. Drag and drop, file picker, or both. The presigned URL pattern means the file never touches the Lambda function — it goes from the browser straight to S3. This keeps Lambda fast and the architecture clean.

// 1. Get presigned URL and jobId
const { uploadUrl, jobId } = await fetch(`${API}/upload`, {
  method: 'POST',
  body: JSON.stringify({ fileType: file.type })
}).then(r => r.json());

// 2. Upload directly to S3
await fetch(uploadUrl, { method: 'PUT', body: file });

// 3. Poll for the report
const report = await pollReport(jobId);

Polling runs every 3 seconds with a 3-minute timeout. In practice, Bedrock analysis takes 20–30 seconds. The UI shows three progress states (uploading, analyzing, rendering) so the wait doesn't feel empty.
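The polling loop is easy to reproduce outside the browser, for example in a smoke-test script. The following is a minimal Python sketch, not the project's actual `pollReport`: the names `fetch_report` and `poll_report` are hypothetical, and it assumes only what the article states, namely that GET /report/{jobId} returns `{"status": "pending"}` until the report exists.

```python
import json
import time
import urllib.request


def fetch_report(api_url, job_id):
    """GET {api_url}/report/{job_id} and decode the JSON body."""
    with urllib.request.urlopen(f"{api_url}/report/{job_id}") as resp:
        return json.loads(resp.read().decode("utf-8"))


def poll_report(job_id, fetch, interval=3.0, timeout=180.0, sleep=time.sleep):
    """Call fetch(job_id) every `interval` seconds until the report is ready.

    Only the time spent sleeping counts toward `timeout`; request latency
    is ignored to keep the sketch simple. Raises TimeoutError on expiry.
    """
    waited = 0.0
    while True:
        report = fetch(job_id)
        # Assumption from the article: a pending job returns {"status": "pending"}.
        if report.get("status") != "pending":
            return report
        if waited + interval > timeout:
            raise TimeoutError(f"report {job_id} not ready after {timeout:.0f}s")
        sleep(interval)
        waited += interval
```

A call such as `poll_report(job_id, lambda j: fetch_report(API_URL, j))` would mirror the frontend's 3-second interval and 3-minute timeout; `API_URL` stands for whatever `make output` prints for your deployment.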

The Backend

Three Lambda functions, each with one job.

get-upload-url: receives the upload request, generates a jobId (UUID), creates a presigned S3 PUT URL valid for 5 minutes, and returns both to the browser.

# ContentType is part of the signature, so the browser's PUT must send the same Content-Type header
presigned_url = s3.generate_presigned_url(
    'put_object',
    Params={
        'Bucket': BUCKET_NAME,
        'Key': f'uploads/{job_id}.png',
        'ContentType': 'image/png'
    },
    ExpiresIn=300  # seconds, i.e. 5 minutes
)

return {'uploadUrl': presigned_url, 'jobId': job_id}

architecture-reviewer: triggered by an S3 event when a file lands in uploads/. It fetches the image, encodes it as base64, sends it to Bedrock with the analysis prompt, and saves the JSON report to reports/{jobId}.json.
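The first half of the reviewer's job (locating the uploaded object and encoding it) happens before the Bedrock call. Here is a minimal sketch of that part, assuming the `uploads/{jobId}.png` key layout shown above. The helper names `job_from_s3_event` and `load_image_base64` are hypothetical, and the S3 client is passed in so the logic can be exercised without AWS credentials.

```python
import base64
from urllib.parse import unquote_plus


def job_from_s3_event(event):
    """Return (bucket, key, job_id) for the first record of an S3 event."""
    record = event["Records"][0]["s3"]
    bucket = record["bucket"]["name"]
    # S3 URL-encodes object keys in event notifications.
    key = unquote_plus(record["object"]["key"])
    # Assumed key layout: uploads/{jobId}.png
    filename = key.rsplit("/", 1)[-1]
    job_id = filename.rsplit(".", 1)[0]
    return bucket, key, job_id


def load_image_base64(s3_client, bucket, key):
    """Download the object and return its bytes as a base64 string."""
    body = s3_client.get_object(Bucket=bucket, Key=key)["Body"].read()
    return base64.b64encode(body).decode("ascii")
```

In a Lambda handler, `s3_client` would be a `boto3.client("s3")`, and the returned string is what goes into the `data` field of the image block in the request.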

response = bedrock.invoke_model(
    modelId="anthropic.claude-3-5-sonnet-20241022-v2:0",
    contentType="application/json",
    accept="application/json",
    body=json.dumps({
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": 4096,
        "messages": [{
            "role": "user",
            "content": [
                {
                    "type": "image",
                    "source": {
                        "type": "base64",
                        "media_type": "image/png",
                        "data": base64_image
                    }
                },
                {"type": "text", "text": ANALYSIS_PROMPT}
            ]
        }]
    })
)

get-report: reads reports/{jobId}.json from S3 and returns it. Returns {"status": "pending"} if the file doesn't exist yet. The browser handles the rest.
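The pending-or-ready behaviour of get-report fits in a few lines. This is a hedged sketch rather than the repository's handler: the function name `get_report` and the API Gateway proxy-style return shape are assumptions, and the S3 client is injected so the function can be tested with a stub.

```python
import json


def get_report(s3_client, bucket, job_id):
    """Return the stored report, or {"status": "pending"} if it is not there yet."""
    key = f"reports/{job_id}.json"
    try:
        obj = s3_client.get_object(Bucket=bucket, Key=key)
    except Exception as err:
        # botocore's ClientError exposes the S3 error code via err.response.
        code = getattr(err, "response", {}).get("Error", {}).get("Code")
        if code == "NoSuchKey":
            return {"statusCode": 200, "body": json.dumps({"status": "pending"})}
        raise
    return {"statusCode": 200, "body": obj["Body"].read().decode("utf-8")}
```

One detail worth knowing: S3 only answers with `NoSuchKey` when the caller also has `s3:ListBucket` on the bucket. Without it, a missing object comes back as `AccessDenied`, so a tightly scoped role would need either that permission or an extra branch for the 403 case.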

The Prompt That Makes It Work

A generic "analyze this AWS architecture" prompt produces generic output. The breakthrough is forcing the model into a rigid JSON schema with specific fields for each WAF pillar, severity levels it must choose from, and explicit scoring criteria.

You are an AWS Well-Architected Framework expert. Analyze the provided
AWS architecture diagram and return ONLY a valid JSON object.

Score each pillar 1-5:
1 = Critical gaps 2 = Poor 3 = Adequate 4 = Good 5 = Excellent

For each issue, classify severity as: CRITICAL, HIGH, MEDIUM, or LOW
For each recommendation, classify priority as: HIGH, MEDIUM, or LOW

Return this exact structure:
{
  "summary": "...",
  "overallRisk": "LOW|MEDIUM|HIGH|CRITICAL",
  "pillarScores": { ... },
  "issues": [ ... ],
  "recommendations": [ ... ],
  "wellArchitectedRisks": [ ... ],
  "detectedServices": [ ... ],
  "positives": [ ... ]
}

Enforcing JSON output does two things: it makes parsing reliable, and it forces the model to commit to structured judgments rather than hedging with prose. The severity classification alone dramatically improves signal quality: reviewers know immediately what to fix first.
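A prompt can ask for "ONLY a valid JSON object", but the reviewer still has to check that it got one before saving it. Below is a minimal validation sketch, assuming the decoded Bedrock response body follows the Anthropic Messages shape (a `content` list of text blocks). `parse_report` is a hypothetical helper, and it checks only the top-level keys and the `overallRisk` values that the prompt spells out, since the nested structures are elided in the prompt excerpt.

```python
import json

REQUIRED_KEYS = {
    "summary", "overallRisk", "pillarScores", "issues",
    "recommendations", "wellArchitectedRisks", "detectedServices", "positives",
}
RISK_LEVELS = {"LOW", "MEDIUM", "HIGH", "CRITICAL"}


def parse_report(response_body):
    """Extract and validate the report from a decoded Bedrock response body."""
    text = "".join(
        block["text"]
        for block in response_body["content"]
        if block.get("type") == "text"
    )
    # Tolerate stray prose or code fences around the JSON object.
    start, end = text.find("{"), text.rfind("}")
    if start == -1 or end <= start:
        raise ValueError("no JSON object found in model output")
    report = json.loads(text[start:end + 1])
    missing = REQUIRED_KEYS - set(report)
    if missing:
        raise ValueError(f"report is missing keys: {sorted(missing)}")
    if report["overallRisk"] not in RISK_LEVELS:
        raise ValueError(f"unexpected overallRisk: {report['overallRisk']!r}")
    return report
```

With `invoke_model`, the argument would be `json.loads(response["body"].read())`. Failing loudly here means a malformed answer never reaches reports/ and the UI keeps showing the pending state instead of rendering a broken review.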

It Actually Works

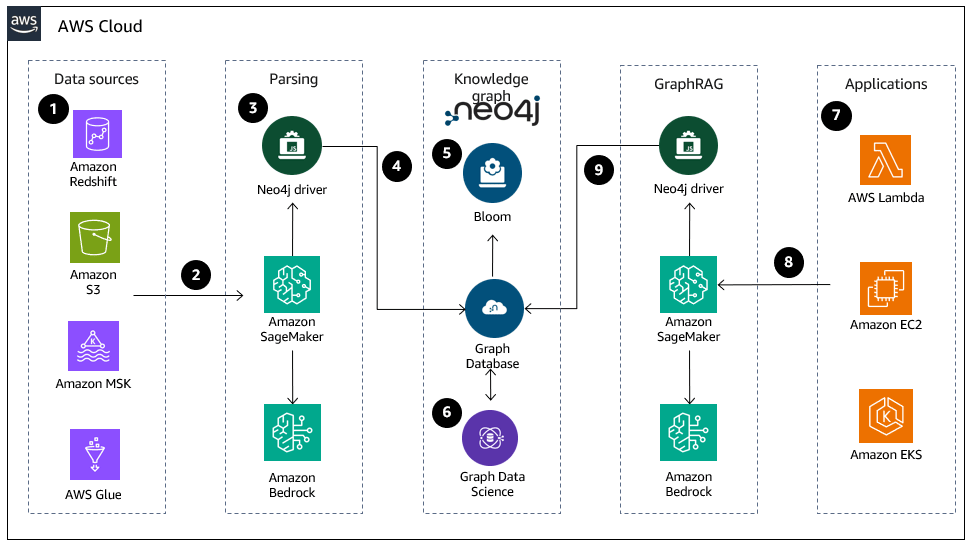

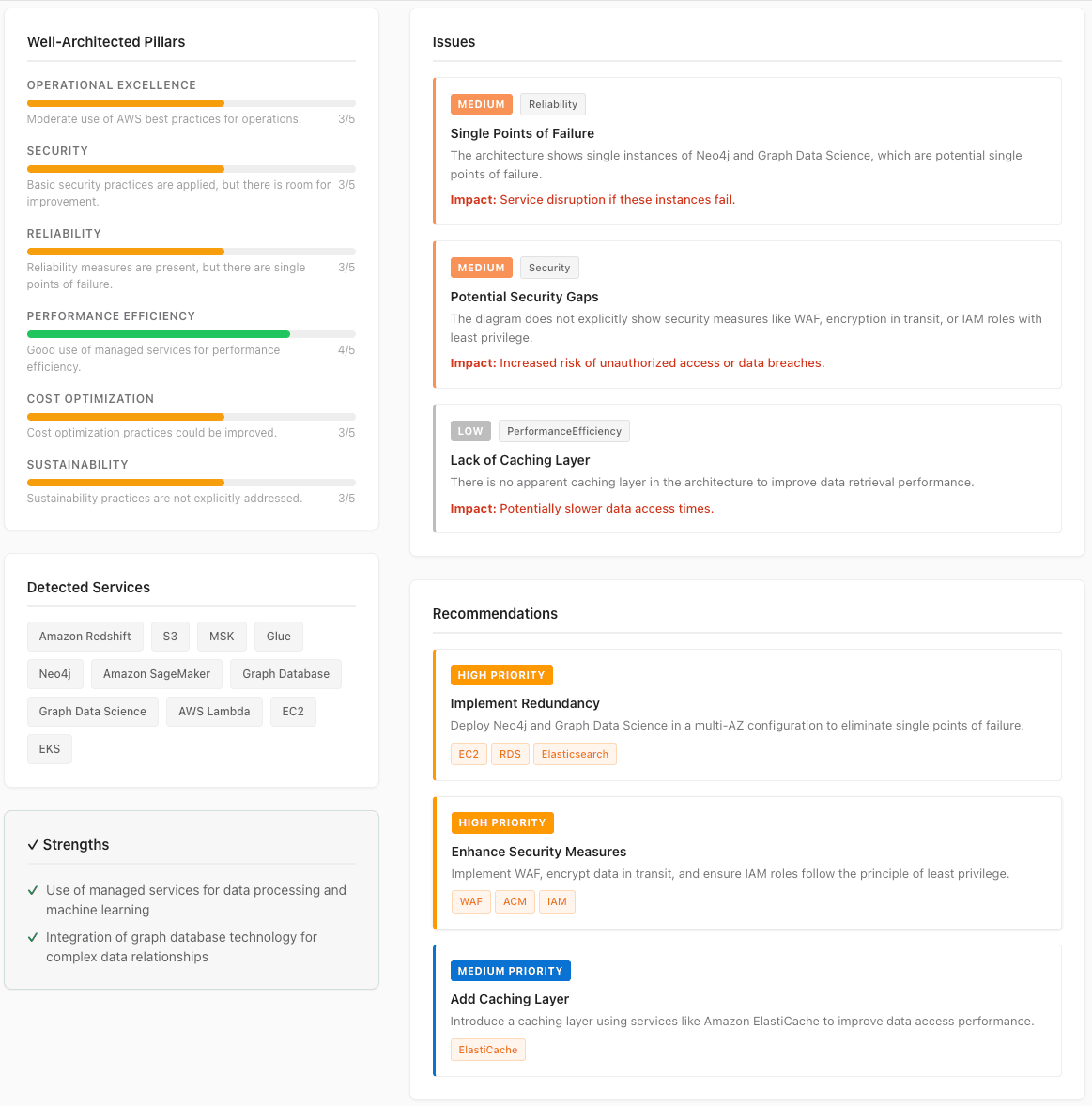

I tested it with a real architecture: the Knowledge Graphs and GraphRAG reference architecture from AWS, running Neo4j on AWS Cloud with SageMaker, Bedrock, Lambda, EC2, and EKS.

Within 25 seconds, the reviewer returned a complete report.

The model correctly identified all 10 services in the diagram. It scored Performance Efficiency at 4/5 (the managed services genuinely do that well) and flagged three real issues:

- Single Points of Failure — Neo4j and Graph Data Science with no redundancy (MEDIUM / Reliability)

- Potential Security Gaps — no WAF, no encryption in transit shown, no IAM least privilege documented (MEDIUM / Security)

- Lack of Caching Layer — no ElastiCache or equivalent for data retrieval (LOW / Performance Efficiency)

All three are legitimate observations about this reference architecture. The model did not hallucinate services, did not invent problems, and correctly recognized the strengths: managed services for ML workloads, graph database integration for complex relationships.

The Bedrock Gotcha: Multimodal Requires us-east-1

The first version pointed at eu-central-1. Claude 3.5 Sonnet is available there — but not the multimodal version that can process images. The call failed with:

ValidationException: The provided model identifier is invalid

At the time of writing, Claude 3.5 Sonnet's vision capability is available in us-east-1 and us-west-2. If your deployment region is different, you either invoke Bedrock cross-region or move the Lambda to one of those regions. I moved the reviewer Lambda to us-east-1. API Gateway stays wherever the user is, because latency on the Bedrock call dominates anyway, not the API hop.

Second gotcha: the S3 event trigger and the Lambda function must be in the same region. The S3 bucket moved to us-east-1 along with the Lambda. If you're running this in a multi-region setup, account for this in your Terraform variable configuration.

IAM — Scoped to the Exact Model

Same principle as my Terraform reviewer: never bedrock:*. The Lambda role gets permission to invoke exactly one model.

data "aws_iam_policy_document" "bedrock_access" {
  statement {
    effect  = "Allow"
    actions = ["bedrock:InvokeModel"]
    resources = [
      "arn:aws:bedrock:us-east-1::foundation-model/${var.bedrock_model_id}"
    ]
  }
}

The S3 policy is split into two statements: PutObject on the uploads/ prefix only for the presigned URL Lambda, and GetObject on uploads/ plus PutObject/GetObject on reports/ for the reviewer Lambda. No Lambda has broader bucket access than it needs.
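To make the S3 split concrete, here it is written out as plain IAM policy JSON. It is built in Python only so it can be printed and checked; the project itself defines its policies in Terraform, and the function name `s3_policies` and the exact resource patterns are assumptions derived from the description above.

```python
import json


def s3_policies(bucket_name):
    """Build the two prefix-scoped S3 policy documents described above."""
    bucket_arn = f"arn:aws:s3:::{bucket_name}"
    upload_url_lambda = {
        "Version": "2012-10-17",
        "Statement": [
            # The presigned URL is signed with this role, so it needs PutObject.
            {"Effect": "Allow", "Action": ["s3:PutObject"],
             "Resource": [f"{bucket_arn}/uploads/*"]},
        ],
    }
    reviewer_lambda = {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": ["s3:GetObject"],
             "Resource": [f"{bucket_arn}/uploads/*"]},
            {"Effect": "Allow", "Action": ["s3:PutObject", "s3:GetObject"],
             "Resource": [f"{bucket_arn}/reports/*"]},
        ],
    }
    return {
        "get-upload-url": upload_url_lambda,
        "architecture-reviewer": reviewer_lambda,
    }


if __name__ == "__main__":
    print(json.dumps(s3_policies("BUCKET_NAME"), indent=2))
```

Scoping by prefix rather than by bucket is what keeps a bug in the upload path from ever reading or overwriting a finished report.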

Cost

For 1,000 architecture reviews per month:

| Service | Monthly cost |
|---|---|
| Bedrock — Claude 3.5 Sonnet | ~$18.00 |
| Lambda (3 functions) | ~$0.20 |
| S3 (storage + requests) | ~$0.05 |
| API Gateway | ~$0.04 |
| CloudWatch Logs | ~$0.01 |
| Total | ~$18.30 |

That's $0.018 per review. A junior cloud architect's hourly rate in DACH is €60–80. At the low end of that range, a 5-minute manual review costs €5, roughly the price of 270 AI reviews (ignoring the EUR/USD difference). For a team running weekly architecture reviews across multiple projects, the ROI is immediate.
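The per-review figure and the manual comparison follow directly from the table. Here is a small sketch of the arithmetic, using the table's approximate monthly costs and the low end of the quoted hourly rate; currencies are deliberately not converted, so the ratio is only indicative.

```python
MONTHLY_COST_USD = {
    "Bedrock": 18.00,
    "Lambda": 0.20,
    "S3": 0.05,
    "API Gateway": 0.04,
    "CloudWatch Logs": 0.01,
}
REVIEWS_PER_MONTH = 1000


def cost_per_review(monthly_costs, reviews):
    """Total monthly spend divided by the number of reviews."""
    return sum(monthly_costs.values()) / reviews


def manual_review_cost(hourly_rate, minutes):
    """Cost of a human review at a given hourly rate."""
    return hourly_rate * minutes / 60


per_review = cost_per_review(MONTHLY_COST_USD, REVIEWS_PER_MONTH)
manual = manual_review_cost(hourly_rate=60, minutes=5)

print(f"AI review:     {per_review:.4f} USD")
print(f"Manual review: {manual:.2f} EUR")
print(f"AI reviews per manual review: {manual / per_review:.0f}")
```

Bedrock accounts for nearly all of the total, so the per-review cost scales almost linearly with token usage: a longer prompt or a larger `max_tokens` budget moves the number far more than anything on the Lambda or S3 side.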

Deploy It Yourself

# Clone and initialize
git clone https://github.com/romanceresnak/aws-architecture-reviewer
cd aws-architecture-reviewer
make init

# Preview infrastructure
make plan
# Plan: 25 to add, 0 to change, 0 to destroy.

# Deploy everything
make deploy

# Get your endpoints
make output
# api_url = "https://abc123.execute-api.us-east-1.amazonaws.com/dev"
# upload_endpoint = ".../dev/upload"
# report_endpoint = ".../dev/report/{jobId}"

Before deploying: enable Claude 3.5 Sonnet in the Amazon Bedrock console → Model access → Request access. Approval takes about 2 minutes.

Open frontend/index.html in your browser. No hosting required for testing — the presigned URL pattern works from file://.

Cleanup when you're done:

make destroy
aws s3 rb s3://BUCKET_NAME --force # if bucket is non-empty

What's Next

- Authentication — Cognito user pools or API key gating. Currently the API endpoint is open.

- PDF and draw.io support — the Lambda can already convert formats before sending to Bedrock; the frontend just needs the upload MIME type handling

- Diff reviews — compare two architecture versions and report what changed in WAF terms

- GitHub Actions integration — trigger a review on every architecture diagram commit, post results as PR annotations

- PDF report export — Lambda generates a formatted PDF using ReportLab; download link in the UI

The architecture is intentionally minimal. Three Lambdas, one S3 bucket, one API Gateway. Adding features means adding prompts and Lambda logic — not redesigning the infrastructure.

Final Thoughts

The Well-Architected Framework has been public since 2015. The knowledge is there. Getting it applied consistently to every diagram, every team, every sprint — that's the problem. Automation doesn't replace the architect. It eliminates the gap between "diagram exists" and "diagram has been reviewed."