I Built an AI That Reviews Terraform Pull Requests Automatically

Every time a developer opens a PR, an AI catches security issues, missing best practices, and cost problems — before any human reviewer sees the code.

Every team working with Terraform at scale eventually hits the same wall. Pull requests pile up. Reviewers are busy. Security misconfigurations slip through. Someone deploys an S3 bucket without encryption, a security group with 0.0.0.0/0 on port 22, or an RDS instance with no deletion protection. Not because the team is careless — but because manual infrastructure review is tedious, inconsistent, and slow.

I've been that reviewer. Spending 30 minutes on a PR catching the same class of issues I caught the week before. So I built a system where the first reviewer isn't a human at all.

Repository: romanceresnak/bedrock-terraform-pr-review (Terraform modules + Lambda + Bedrock, fully deployable)

Architecture

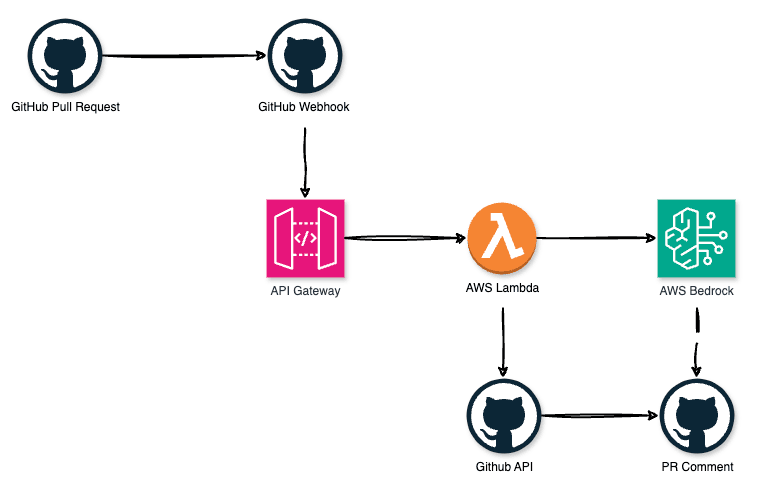

The system is entirely serverless: no EC2, no containers, no infrastructure to manage. When a developer opens or updates a pull request, GitHub sends a webhook to an API Gateway endpoint. Lambda picks it up, fetches the diff, sends it to Amazon Bedrock, and posts the review as a PR comment. The whole round trip takes under 10 seconds.
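The repository's handler is not reproduced here, but the first step, deciding whether a webhook delivery deserves a review at all, is easy to sketch. This assumes an API Gateway proxy integration and GitHub's standard pull_request payload; parse_pr_event and the set of actions in REVIEW_ACTIONS are hypothetical choices, not the project's code.

```python
import json
from typing import Optional

# Hypothetical choice of which pull_request actions trigger a review:
# a new PR, new commits pushed to it, or a reopened PR.
REVIEW_ACTIONS = {"opened", "synchronize", "reopened"}


def parse_pr_event(event: dict) -> Optional[dict]:
    """Pull out what the reviewer needs from an API Gateway proxy event.

    Returns None for deliveries that should be ignored (pings, closed PRs, ...).
    Assumes the body arrives as plain JSON text, not base64-encoded.
    """
    # Header names are case-insensitive, so normalise before looking them up.
    headers = {k.lower(): v for k, v in (event.get("headers") or {}).items()}
    if headers.get("x-github-event") != "pull_request":
        return None
    payload = json.loads(event.get("body") or "{}")
    if payload.get("action") not in REVIEW_ACTIONS:
        return None
    pr = payload["pull_request"]
    return {
        "repo": payload["repository"]["full_name"],  # "owner/name"
        "number": pr["number"],
        "title": pr["title"],
    }
```

Filtering on the action keeps edits to the PR description or label changes from triggering a fresh (and billable) Bedrock call.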

Modular Terraform Infrastructure

The entire setup is codified in four focused Terraform modules — each with a single responsibility, reusable across projects.

```text
modules/
├── iam/          # execution role + least-privilege policies
├── secrets/      # GitHub token in Secrets Manager
├── lambda/       # function + deployment package
└── api_gateway/  # REST API + webhook endpoint
```

Secrets Manager: the lifecycle gotcha

The GitHub token is stored in Secrets Manager and read at runtime, never passed through environment variables. The lifecycle.ignore_changes block is critical: Terraform creates the secret with a placeholder, and after the initial deployment the real token is set with the AWS CLI. Without ignore_changes, every terraform apply would overwrite the real token with the placeholder.
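On the read side, the Lambda function looks the token up when it runs. A minimal sketch, assuming boto3 and that the secret keeps the {"token": ...} JSON shape of the Terraform placeholder; get_github_token is a hypothetical helper, and the client parameter exists only so it can be exercised without AWS.

```python
import json
from typing import Any, Dict, Optional

# Per-container cache: warm Lambda invocations reuse the token instead of
# calling Secrets Manager on every webhook.
_token_cache: Dict[str, str] = {}


def get_github_token(secret_id: str, client: Optional[Any] = None) -> str:
    """Return the "token" field of the JSON secret (hypothetical helper)."""
    if secret_id in _token_cache:
        return _token_cache[secret_id]
    if client is None:
        import boto3  # bundled with the Lambda Python runtime

        client = boto3.client("secretsmanager")
    response = client.get_secret_value(SecretId=secret_id)
    # Same shape as the Terraform placeholder: {"token": "..."}
    token = json.loads(response["SecretString"])["token"]
    _token_cache[secret_id] = token
    return token
```

One consequence of caching: after the token is replaced with the CLI, warm containers keep using the old value until Lambda recycles them.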

```hcl
resource "aws_secretsmanager_secret" "github_token" {
  name        = "${var.project_name}-github-token"
  description = "GitHub PAT for PR reviews"
  tags        = var.tags
}

resource "aws_secretsmanager_secret_version" "github_token" {
  secret_id     = aws_secretsmanager_secret.github_token.id
  secret_string = jsonencode({ token = "PLACEHOLDER" })

  lifecycle {
    ignore_changes = [secret_string] # managed outside Terraform
  }
}
```

IAM: scoped to exact model ARN

The Lambda role gets exactly three permissions. The Bedrock statement is scoped to the specific model ARN — not bedrock:*. This is the IAM pattern every Bedrock project should follow.
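What "scoped to the specific model ARN" means concretely: Bedrock foundation-model ARNs carry an empty account field. A minimal sketch, assuming the on-demand Claude 3 Haiku model chosen later in this article; foundation_model_arn and has_wildcard are hypothetical helpers, the second one the kind of check a CI job could run against rendered policies.

```python
from typing import Any


def foundation_model_arn(region: str, model_id: str) -> str:
    """ARN of an AWS-provided Bedrock foundation model (empty account field)."""
    return f"arn:aws:bedrock:{region}::foundation-model/{model_id}"


def _as_list(value: Any) -> list:
    # IAM allows Statement, Action and Resource to be a single value or a list.
    return value if isinstance(value, list) else [value]


def has_wildcard(policy: dict) -> bool:
    """True if any statement uses '*' in an action or resource."""
    for stmt in _as_list(policy["Statement"]):
        for item in _as_list(stmt.get("Action", [])) + _as_list(stmt.get("Resource", [])):
            if "*" in item:
                return True
    return False


model_arn = foundation_model_arn("eu-west-1", "anthropic.claude-3-haiku-20240307-v1:0")
scoped = {"Version": "2012-10-17", "Statement": [
    {"Effect": "Allow", "Action": ["bedrock:InvokeModel"], "Resource": model_arn}]}
broad = {"Version": "2012-10-17", "Statement": [
    {"Effect": "Allow", "Action": ["bedrock:*"], "Resource": "*"}]}
print(has_wildcard(scoped), has_wildcard(broad))  # False True
```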

```hcl
resource "aws_iam_role_policy" "lambda_bedrock" {
  role = aws_iam_role.lambda.id # assumed name of the module's execution role

  policy = jsonencode({
    Version = "2012-10-17"
    Statement = [{
      Effect   = "Allow"
      Action   = ["bedrock:InvokeModel"]
      Resource = var.bedrock_model_arn # specific ARN, not wildcard
    }]
  })
}

resource "aws_iam_role_policy" "lambda_secrets" {
  role = aws_iam_role.lambda.id # assumed name of the module's execution role

  policy = jsonencode({
    Version = "2012-10-17"
    Statement = [{
      Effect   = "Allow"
      Action   = ["secretsmanager:GetSecretValue"]
      Resource = var.github_token_secret_arn
    }]
  })
}
```

API Gateway: circular dependency fix

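The Lambda permission needs the API Gateway execution ARN, while the API Gateway integration needs the Lambda invoke ARN. With the permission in the lambda module and the integration in the api_gateway module, each module depends on the other: a classic circular dependency. The fix is to move aws_lambda_permission into the root module, where it can reference both modules' outputs, with an explicit depends_on.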
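The /*/* suffix on source_arn is what lets every stage and method of this one API invoke the function. Invoke ARNs have the form arn:aws:execute-api:region:account:api-id/stage/METHOD/path, and a REST API's execution_arn stops at the API ID. A rough illustration with made-up IDs, using shell-style wildcard matching as an approximation of how Lambda compares source ARNs:

```python
from fnmatch import fnmatchcase

# Made-up values; a real execution_arn comes from the api_gateway module output.
execution_arn = "arn:aws:execute-api:eu-west-1:123456789012:abc123"
source_arn = f"{execution_arn}/*/*"


def allowed(invoke_arn: str) -> bool:
    """Approximate the wildcard match between an invoke ARN and source_arn."""
    return fnmatchcase(invoke_arn, source_arn)


print(allowed(f"{execution_arn}/prod/POST/webhook"))  # True
print(allowed("arn:aws:execute-api:eu-west-1:123456789012:other/prod/POST/webhook"))  # False
```

A stricter source_arn (for example, fixing the stage and method) narrows who can invoke the function at the cost of editing it whenever the route changes.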

```hcl
# Defined in root module to break the circular dependency
resource "aws_lambda_permission" "api_gateway" {
  statement_id  = "AllowAPIGatewayInvoke"
  action        = "lambda:InvokeFunction"
  function_name = module.lambda.function_name
  principal     = "apigateway.amazonaws.com"
  source_arn    = "${module.api_gateway.execution_arn}/*/*"

  depends_on = [module.lambda, module.api_gateway]
}
```

The Heart: Prompt Engineering

The Lambda orchestration is standard — fetch diff, call API, post comment. The interesting part is what you ask the AI. A generic "Review this Terraform code" prompt produces vague, useless output. The breakthrough came from enforcing structure, severity levels, and requiring concrete fix examples in the response.
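The fetch and post steps are plain calls to GitHub's REST API. A minimal standard-library sketch, assuming the token comes from Secrets Manager; the endpoints and the application/vnd.github.diff media type are GitHub's, while diff_request and comment_request are hypothetical helpers. A general PR comment goes through the issues endpoint because every pull request is also an issue.

```python
import json
import urllib.request

API = "https://api.github.com"


def diff_request(repo: str, number: int, token: str) -> urllib.request.Request:
    """GET the pull request rendered as a unified diff."""
    return urllib.request.Request(
        f"{API}/repos/{repo}/pulls/{number}",
        headers={
            "Accept": "application/vnd.github.diff",
            "Authorization": f"Bearer {token}",
        },
    )


def comment_request(repo: str, number: int, token: str, body: str) -> urllib.request.Request:
    """POST the review as a general comment on the PR conversation."""
    return urllib.request.Request(
        f"{API}/repos/{repo}/issues/{number}/comments",
        data=json.dumps({"body": body}).encode("utf-8"),
        headers={
            "Accept": "application/vnd.github+json",
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

In the handler, each request would be sent with urllib.request.urlopen(req, timeout=10); the diff response body is the text that goes into the prompt.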

```python
def build_prompt(diff: str, pr_title: str) -> str:
    return f"""You are a senior AWS DevOps engineer reviewing
a Terraform pull request.

PR Title: {pr_title}

Focus on:
1. Security - unencrypted storage, open security groups,
   overly permissive IAM, missing KMS
2. AWS best practices - missing tags, no deletion protection,
   no versioning, hardcoded values
3. Cost - overprovisioned instances, missing lifecycle rules

Format:
- One-sentence summary of the change
- Issues: Critical / Warning / Suggestion
- For each issue: a concrete code fix
- Final verdict: Approve / Approve with comments / Request changes

If the code is clean, say so. Do not invent issues.

Diff:
{diff}
"""
```

Why temperature: 0.3?

Higher temperatures produce creative, varied responses: good for writing, bad for code review. At 0.3, the wording still varies a little from run to run, but the structure stays consistent. Predictable output format matters more than creativity here.
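Predictable structure also makes the output usable by code, not just by people. As a hypothetical follow-up that the repository does not claim to implement (labelling the PR, say, or failing a status check), the verdict could be pulled out like this. The helper looks for the prompt's "Final verdict" line and, as a fallback, the "Overall Assessment" heading that appeared in the test review below.

```python
from typing import Optional

# Verdicts allowed by the prompt, longest first so that
# "Approve with comments" is not reported as plain "Approve".
VERDICTS = ("Approve with comments", "Request changes", "Approve")
# Line markers: the prompt's "Final verdict" and the "Overall Assessment"
# heading seen in the test review.
MARKERS = ("verdict", "overall assessment")


def extract_verdict(review: str) -> Optional[str]:
    """Return the verdict from the last marker line that names one, else None."""
    for line in reversed(review.splitlines()):
        lowered = line.lower()
        if any(marker in lowered for marker in MARKERS):
            for verdict in VERDICTS:
                if verdict.lower() in lowered:
                    return verdict
    return None
```

The lower the temperature, the more reliably a simple line-based parser like this keeps working.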

It Actually Works

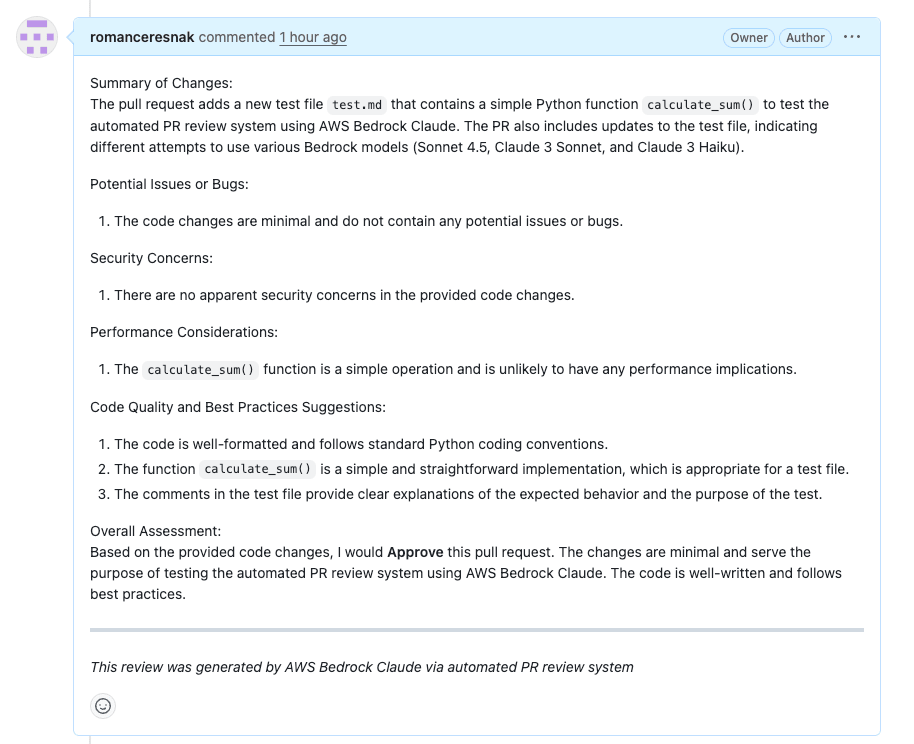

Here is the real test. To verify the system end-to-end, I opened a pull request in the repository containing a simple test file, confirming the full pipeline from webhook to PR comment.

Opening a test PR: "Testing automated PR review using AWS Bedrock Claude"

Within seconds of opening the PR, the AI automatically posted its review as a comment. No manual trigger, no webhook test button — just the normal GitHub PR flow.

The review correctly identified that the test code had no bugs, provided a structured analysis across five sections plus an overall assessment, and concluded with Approve. It did not invent problems that were not there, which is exactly what the explicit "Do not invent issues" instruction in the prompt is meant to prevent.

As you can see from the actual review, Claude 3 Haiku analyzed:

- Summary of Changes: Accurately described the PR's purpose

- Potential Issues or Bugs: Confirmed no issues found

- Security Concerns: No security concerns detected

- Performance Considerations: Assessed the simple operation

- Code Quality and Best Practices: Validated formatting and conventions

- Overall Assessment: Approve — with clear reasoning

The Bedrock Gotcha That Cost Me Hours

Model availability varies by region. My initial config used anthropic.claude-3-5-sonnet-20241022-v2:0, which failed in eu-west-1 with "The provided model identifier is invalid". Then Claude Sonnet 4.5 threw a different error:

```text
ValidationException: Invocation of model ID with on-demand throughput isn't supported. Retry with an inference profile.
```

Newer models can only be invoked on demand through an inference profile, not a direct model ID. I settled on Claude 3 Haiku: it is available in eu-west-1, supports ON_DEMAND throughput, and costs roughly 12x less per token than Sonnet. For automated code review, I found the quality difference negligible.
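The model ID and the Messages API come together in a single invoke_model call on a bedrock-runtime client. A minimal sketch, assuming boto3: invoke_model and its modelId, body, contentType and accept parameters are real, while review_with_bedrock is a hypothetical name. The client is passed in so the function can be tried without AWS credentials.

```python
import json
from typing import Any

# On-demand model ID used in this article. A newer model would take an
# inference profile ID here instead; invoke_model accepts either.
MODEL_ID = "anthropic.claude-3-haiku-20240307-v1:0"


def review_with_bedrock(client: Any, prompt: str, model_id: str = MODEL_ID) -> str:
    """Send one Messages API request and return the reply text.

    `client` is a bedrock-runtime client, e.g. boto3.client("bedrock-runtime").
    """
    response = client.invoke_model(
        modelId=model_id,
        contentType="application/json",
        accept="application/json",
        body=json.dumps({
            "anthropic_version": "bedrock-2023-05-31",
            "max_tokens": 4000,
            "temperature": 0.3,
            "messages": [{"role": "user", "content": prompt}],
        }),
    )
    # The body is a stream; the reply is a list of content blocks.
    payload = json.loads(response["body"].read())
    return "".join(block["text"] for block in payload["content"] if block.get("type") == "text")
```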

Also: the legacy Bedrock completions format does not work with Claude 3 models, and in my setup it failed without an obvious error. The Messages API is required.

```python
import json

prompt = "Review this diff..."  # in the Lambda: build_prompt(diff, pr_title)

# ❌ wrong - legacy format
legacy_body = json.dumps({
    "prompt": prompt,
    "max_tokens": 500,
})

# ✅ correct - messages api
body = json.dumps({
    "anthropic_version": "bedrock-2023-05-31",  # required field!
    "max_tokens": 4000,
    "temperature": 0.3,
    "messages": [{
        "role": "user",
        "content": prompt,
    }],
})
```

Cost

For a team opening 100 PRs a month, total infrastructure cost is around $9-13/month. Compare that with roughly 25 hours of review time saved (about 15 minutes per PR), and the ROI case writes itself.

| Service | Monthly cost |
|---|---|
| Lambda (512 MB, ~10 s avg) | ~$0.20 |
| API Gateway | ~$0.01 |
| Bedrock (Claude 3 Haiku) | ~$8-12 |
| Secrets Manager | ~$0.40 |
| CloudWatch Logs | ~$0.50 |
| Total | ~$9-13/month |
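The Bedrock line dominates, and it scales with how many tokens each review consumes, so it is worth estimating with your own numbers. A back-of-envelope sketch: monthly_cost is a hypothetical helper, and the example inputs (10,000 input and 1,000 output tokens per PR, $0.25 and $1.25 per million tokens, roughly Claude 3 Haiku's published on-demand rates at the time of writing, plus the $0.40 secret as a fixed charge) are assumptions to replace with your own diff sizes and the Bedrock pricing for your region.

```python
def monthly_cost(
    prs: int,
    input_tokens_per_pr: int,
    output_tokens_per_pr: int,
    input_price_per_m: float,
    output_price_per_m: float,
    fixed: float = 0.0,
) -> float:
    """Bedrock token cost for `prs` reviews plus fixed monthly charges, in USD."""
    per_pr = (
        input_tokens_per_pr * input_price_per_m
        + output_tokens_per_pr * output_price_per_m
    ) / 1_000_000  # prices are quoted per million tokens
    return prs * per_pr + fixed


estimate = monthly_cost(100, 10_000, 1_000, 0.25, 1.25, fixed=0.40)
print(f"${estimate:.2f} per month")
```

Because input tokens are mostly the diff itself, trimming what goes into the prompt is the main cost lever.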

Deploy It Yourself

- Clone the repo and run ./build_lambda.sh to package the function
- Run terraform init && terraform apply
- Set your token via aws secretsmanager put-secret-value
- Copy the webhook URL from terraform output api_gateway_endpoint
- Add the webhook to GitHub: Settings → Webhooks → Pull requests
- Open a PR and watch the review appear
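The webhook step can also be scripted instead of done in the Settings page. A minimal standard-library sketch: the endpoint (POST /repos/{owner}/{repo}/hooks) and payload fields come from GitHub's REST API, create_webhook_request is a hypothetical helper, and the token must be allowed to manage repository webhooks. Choosing the json content type matters if the handler parses the body as JSON, and setting a secret is the prerequisite for the signature validation mentioned under What's Next.

```python
import json
import urllib.request


def create_webhook_request(repo: str, token: str, url: str, secret: str) -> urllib.request.Request:
    """Build a GitHub REST call that registers a pull_request webhook."""
    payload = {
        "name": "web",
        "active": True,
        "events": ["pull_request"],
        "config": {
            "url": url,              # the api_gateway_endpoint output
            "content_type": "json",  # JSON bodies instead of form-encoded
            "secret": secret,        # GitHub then signs each delivery
        },
    }
    return urllib.request.Request(
        f"https://api.github.com/repos/{repo}/hooks",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Accept": "application/vnd.github+json",
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Sending it with urllib.request.urlopen(req) creates the hook in one call.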

What's Next

- Webhook signature validation (X-Hub-Signature-256): currently anyone with the URL can trigger reviews
- Smarter diff filtering: skip lockfiles, auto-generated code, non-Terraform files
- Inline PR comments: post feedback on specific lines instead of a general comment
- Multi-repo support: one deployment reviewing PRs across multiple repositories
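The first two items can be sketched with the Python standard library. Assumptions: the handler has the raw request body as bytes (API Gateway passes it as a string, so encode it, and base64-decode it first if isBase64Encoded is set), and "reviewable" means files ending in .tf or .tfvars. Both functions are hypothetical, not code from the repository.

```python
import fnmatch
import hashlib
import hmac
import re
from typing import List, Optional


def signature_is_valid(secret: str, raw_body: bytes, header: Optional[str]) -> bool:
    """Check GitHub's X-Hub-Signature-256 header ("sha256=<hex HMAC of body>")."""
    expected = "sha256=" + hmac.new(secret.encode(), raw_body, hashlib.sha256).hexdigest()
    # Constant-time comparison; bytes avoid errors on non-ASCII header values.
    return hmac.compare_digest(expected.encode(), (header or "").encode())


# Hypothetical filter: only Terraform sources. .terraform.lock.hcl, docs and
# generated files are dropped because they match neither pattern.
KEEP = ("*.tf", "*.tfvars")


def filter_diff(diff: str) -> str:
    """Keep only the per-file sections of a unified diff whose path matches KEEP."""
    sections = re.split(r"(?m)^(?=diff --git )", diff)
    kept: List[str] = []
    for section in sections:
        match = re.match(r"diff --git a/(\S+) b/(\S+)", section)
        if not match:
            continue  # empty preamble before the first file
        name = match.group(2).rsplit("/", 1)[-1]
        if any(fnmatch.fnmatchcase(name, pattern) for pattern in KEEP):
            kept.append(section)
    return "".join(kept)
```

Rejecting unsigned deliveries before parsing the body, and filtering before building the prompt, also keeps Bedrock from being billed for requests that should never reach it.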

The implementation is simpler than it sounds. A webhook, a Lambda, a Bedrock call, a GitHub comment. The interesting work is in the prompt — structuring the AI output so engineers actually act on it.

What makes this pattern powerful is extensibility. The same architecture works for CloudFormation, Kubernetes YAML, CDK stacks. Change the prompt, not the pipeline.

romanceresnak/bedrock-terraform-pr-review

Clone, deploy, and have AI reviewing your PRs this afternoon.
